How to Move from RPA to AI Agents: Enterprise Migration Guide (2026)

RPA hit a ceiling. AI agents handle the exceptions, judgment calls, and cross-system decisions that bots can't. Here's the practical enterprise guide to making the transition.

You invested in RPA. It delivered on its promise for the work it could handle. Bots automated the predictable, screen-based, repetitive tasks that used to eat hours of human time. Data entry, form filling, structured document processing. Real value for those use cases.

But you've also noticed something. The processes you most wanted to automate, the ones with the highest business impact, are still manual. Customer onboarding has too many edge cases. Compliance monitoring requires judgment. Sales research needs interpretation, not just data collection. Support triage involves ambiguous requests that don't fit any script.

You're not alone, and this isn't a failure of your RPA implementation. It's the category's structural ceiling. RPA follows scripts. Your most valuable processes need decisions.

This guide covers why enterprises are moving from RPA to AI agents, what the difference actually means in practice, how to evaluate whether it's the right move for your organization, and the practical path to getting there.

Part 1: Why RPA hit a ceiling

Understanding the limitation clearly is the first step. Not to criticize RPA, but to recognize what it was designed for and where it stops.

What RPA does well

RPA automates screen-level tasks. Software robots record and replay human actions: clicking buttons, filling forms, copying data between applications. For high-volume, fully predictable processes with stable UIs and zero variation, RPA delivers real efficiency. This isn't trivial. Enterprises have saved meaningful time and cost on data entry, invoice processing, and system-to-system data transfers.

Where it stops

Exceptions. When something falls outside the predefined script (ambiguous input, a new data format, an unexpected edge case, a situation requiring judgment), the bot stops and routes to a human. In many enterprise processes, these exceptions represent 30 to 50% of the work, and that is usually the work with the highest business impact.

Maintenance. Bots interact with application UIs. When a UI updates (a button moves, a field is renamed, a layout changes), every bot touching that application breaks. Multiply this across dozens of bots and dozens of applications updating independently, and maintenance costs grow faster than automation value. Many enterprises report spending more on keeping bots alive than the bots save.

Scope. Most RPA deployments automate 5 to 15% of originally targeted processes. Not due to poor execution, but because the remaining processes involve ambiguity, cross-system reasoning, conversations, and judgment that screen-level scripts structurally can't handle.

Scaling. Adding more bots doesn't fix these problems. It amplifies them. More bots means more maintenance, more exception queues, more brittle dependencies on screen interfaces that change without warning.

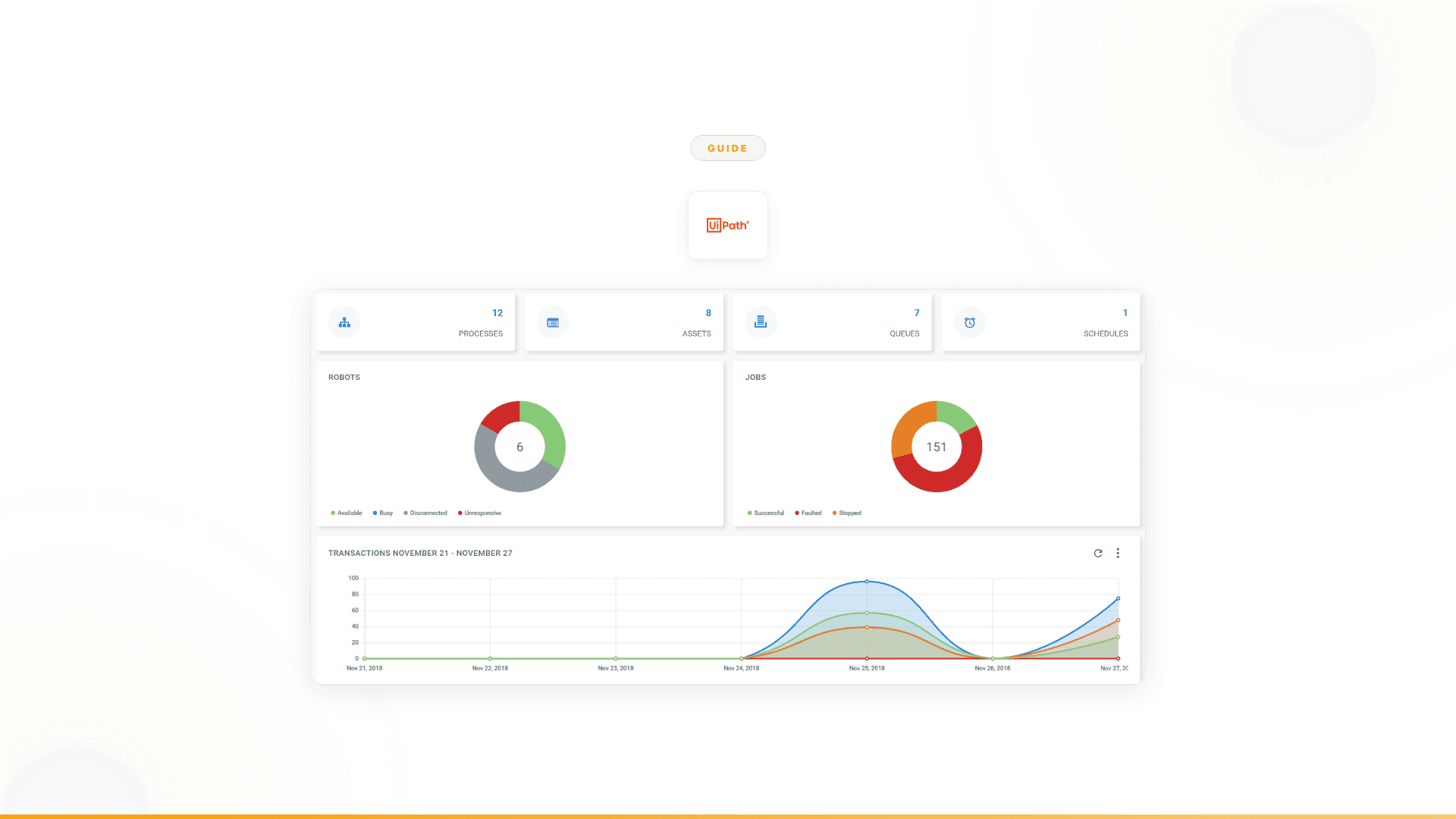

The AI additions don't change the foundation

UiPath, Automation Anywhere, and other RPA vendors are adding AI features. Agent Builder, AI Agent Studio, self-healing bots, document AI. These are real investments. But adding intelligence on top of a screen-level automation foundation is architecturally different from building on intelligence from the start. The underlying scripts are still rule-based. When the rule breaks, the AI layer inherits that brittleness.

This matters. It's the difference between a car with a navigation system bolted on (the system can suggest a route, but the car still can't drive itself) and a self-driving car where intelligence is the foundation (the vehicle reasons about what to do next).

Part 2: What AI agents actually do differently

The term "AI agents" is used loosely. Here's what it means in the context of replacing RPA for enterprise work.

Agents reason about business logic, not screen actions

An RPA bot automates a customer onboarding process by clicking through five applications in sequence: check CRM, verify identity, validate coverage, create account, send confirmation. If any step deviates from the script, the bot stops.

An AI agent automates the same process by understanding the purpose: validate this customer, check their eligibility, create their account, handle any issues. When a customer submits ambiguous information, the agent interprets it. When a system returns unexpected data, the agent reasons about what it means. When an edge case appears, the agent makes a decision within guardrails or escalates with full context. The agent understands why each step matters, not just what buttons to click.

Agents handle exceptions instead of routing them

This is the fundamental shift. RPA's exception-handling model is: stop, route to a human, wait. The human resolves the exception, and the bot continues. Every time. At scale, this means enterprises staff exception queues to handle the work bots can't, which is often the most valuable share of the work.

AI agents handle exceptions autonomously. When a customer's request is ambiguous, the agent holds a conversation to clarify. When data doesn't match expectations, the agent reasons about the discrepancy. When a judgment call is needed, the agent makes it within defined guardrails or escalates with full context about what it tried, what it found, and why it's uncertain. The human gets a clean escalation, not a raw exception dump.
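Here is a minimal Python sketch of that pattern, assuming the agent produces a proposed decision and a confidence score. The threshold value and the `handle_exception`, `Resolution`, and `Escalation` names are hypothetical, for illustration only; this is not Nexus's API, and real platforms define guardrails in their own ways.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical guardrail: the agent acts alone only at or above this confidence.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Resolution:
    """Outcome when the agent resolves the exception itself."""
    case_id: str
    decision: str
    rationale: str


@dataclass
class Escalation:
    """Context handed to a human instead of a raw exception dump."""
    case_id: str
    attempted: list[str]  # what the agent tried
    findings: list[str]   # what it found
    uncertainty: str      # why it is not confident enough to decide


def handle_exception(case_id: str, proposed: str, confidence: float,
                     attempted: list[str], findings: list[str],
                     uncertainty: str) -> Resolution | Escalation:
    """Decide within the guardrail; otherwise escalate with full context."""
    if confidence >= CONFIDENCE_THRESHOLD:
        rationale = f"confidence {confidence:.2f} meets guardrail; evidence: {'; '.join(findings)}"
        return Resolution(case_id, proposed, rationale)
    return Escalation(case_id, attempted, findings, uncertainty)


if __name__ == "__main__":
    result = handle_exception(
        "C-2", "approve", 0.55,
        attempted=["checked CRM record", "queried billing system"],
        findings=["CRM and billing list different addresses"],
        uncertainty="cannot tell which address is current",
    )
    print(result)
```

The point of the `Escalation` record is that the human reviewer starts from the agent's work rather than from scratch.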

Agents connect through APIs, not screens

RPA bots interact with application UIs. This creates the maintenance problem: every UI change breaks the bot. AI agents connect through APIs (Nexus, for example, offers 4,000+ integrations). When an application updates its interface, the API typically stays the same, and the agent keeps working.

This isn't just about reliability. API-level integration means agents can access data and execute actions that aren't available through any screen. System-level data, real-time analytics, bulk operations, cross-system queries. Agents operate at a layer that screen automation can't reach.

Agents are built by business teams, not developers

RPA typically requires dedicated developers or an RPA Center of Excellence. Business teams identify what needs automating, then wait for technical resources to build and maintain the bots. This creates a bottleneck that slows automation to the speed of the developer queue.

AI agents shift ownership to the business teams who understand the workflows. At Lambda, the sales intelligence agent was built by the Head of Sales Intelligence, not an engineer. At Orange, the customer onboarding agents were built by the business team, not IT. The people who understand the problem build and own the solution.

Part 3: How to evaluate whether AI agents are right for you

Not every process needs an AI agent. Here's a practical framework for deciding what stays on RPA and what moves to agents.

Audit your current RPA deployment

Start with three questions about your existing automation:

1. What's your exception rate? For each automated process, what percentage of transactions require human intervention? If it's below 10%, your bot is well-suited for the work. If it's above 30%, the bot is handling the easy part and humans are handling the hard part. That's where agents deliver the most value.

2. What's your maintenance cost as a percentage of automation value? Calculate what you spend annually on maintaining bots (developer time for fixes, testing after UI changes, monitoring, exception queue staffing) versus the value those bots deliver. If maintenance exceeds 40% of value, the RPA approach is becoming structurally unsustainable for those processes.

3. What processes did you target but never automate? Most enterprises have a backlog of processes they identified as automation candidates but deemed "too complex" for RPA. Too many exceptions. Too much ambiguity. Too many judgment calls. That backlog is your highest-value opportunity for agents.
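The first two questions are simple ratios. Below is a minimal Python sketch that applies the thresholds above (exception rate below 10% or above 30%, maintenance above 40% of value); the `BotProcess` fields and the sample figures are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Rule-of-thumb thresholds from the audit questions.
LOW_EXCEPTION_RATE = 0.10
HIGH_EXCEPTION_RATE = 0.30
MAX_MAINTENANCE_RATIO = 0.40


@dataclass
class BotProcess:
    name: str
    transactions: int          # transactions the bot attempted this year
    human_interventions: int   # transactions routed to a person
    annual_maintenance: float  # fixes, retesting after UI changes, monitoring, queue staffing
    annual_value: float        # value the bot delivers per year


def exception_rate(p: BotProcess) -> float:
    return p.human_interventions / p.transactions


def maintenance_ratio(p: BotProcess) -> float:
    return p.annual_maintenance / p.annual_value


def audit(p: BotProcess) -> list[str]:
    """Return audit findings for one automated process."""
    findings = []
    rate = exception_rate(p)
    if rate < LOW_EXCEPTION_RATE:
        findings.append("well suited to RPA")
    elif rate > HIGH_EXCEPTION_RATE:
        findings.append("agent candidate: humans handle the hard part")
    else:
        # The guide gives no rule for 10-30%; this sketch just flags it.
        findings.append("review case by case")
    if maintenance_ratio(p) > MAX_MAINTENANCE_RATIO:
        findings.append("maintenance structurally unsustainable")
    return findings


if __name__ == "__main__":
    portfolio = [
        BotProcess("invoice entry", 10_000, 500, 20_000, 200_000),
        BotProcess("customer onboarding", 2_000, 800, 90_000, 150_000),
    ]
    for p in portfolio:
        print(f"{p.name}: {exception_rate(p):.0%} exceptions, "
              f"{maintenance_ratio(p):.0%} maintenance -> {audit(p)}")
```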

The decision matrix

| Process characteristic | Keep on RPA | Move to AI agents |
|---|---|---|
| Fully predictable, zero variation | Yes | Not needed |
| Stable UI, rarely changes | Yes | Not needed |
| Exception rate below 10% | Yes | Not needed |
| Exception rate above 30% | No, agents handle it better | Yes |
| Requires interpreting ambiguous inputs | No, bots can't | Yes |
| Requires judgment calls or decisions | No, bots can't | Yes |
| Involves conversations with users | No, bots can't | Yes |
| Spans multiple systems with cross-system logic | Partially | Yes, with API integrations |
| High maintenance cost from UI changes | No, UI changes keep breaking bots | Yes, API-level integration removes the UI dependency |
| Legacy system with no API access | Yes (screen-level is the only option) | Not without API access |
| "Too complex" backlog items | No, RPA couldn't handle them | Yes, the primary agent use case |
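The matrix can be applied as a screening function. This Python sketch checks API access first, because a legacy system with no API leaves screen-level automation as the only option regardless of the other rows. The `ProcessProfile` fields are hypothetical, and treating any single agent signal as decisive is a simplification: the matrix says nothing about exception rates between 10 and 30%.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ProcessProfile:
    exception_rate: float              # share of transactions needing a human
    has_api: bool                      # are the systems reachable through APIs?
    ambiguous_inputs: bool = False
    needs_judgment: bool = False
    conversational: bool = False
    cross_system_logic: bool = False
    frequent_ui_changes: bool = False
    too_complex_backlog: bool = False  # targeted for RPA but never automated


def recommend(p: ProcessProfile) -> tuple[str, list[str]]:
    """Map a process onto the decision matrix; returns (verdict, reasons)."""
    if not p.has_api:
        # Legacy system with no API access: screen-level is the only option.
        return "keep on RPA", ["no API access"]
    checks = [
        (p.exception_rate > 0.30, "exception rate above 30%"),
        (p.ambiguous_inputs, "interprets ambiguous inputs"),
        (p.needs_judgment, "requires judgment calls"),
        (p.conversational, "involves conversations with users"),
        (p.cross_system_logic, "spans systems with cross-system logic"),
        (p.frequent_ui_changes, "high maintenance from UI changes"),
        (p.too_complex_backlog, "'too complex' backlog item"),
    ]
    reasons = [label for hit, label in checks if hit]
    if reasons:
        return "move to AI agents", reasons
    # No agent signal fired, so the process stays where it already runs.
    return "keep on RPA", ["no agent signals"]


if __name__ == "__main__":
    print(recommend(ProcessProfile(0.42, True, ambiguous_inputs=True)))
    print(recommend(ProcessProfile(0.05, True)))
```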

Start with the highest-impact gap

Don't try to replace your entire RPA program at once. Identify the single process where the gap between what bots handle and what the business needs is largest. Usually, it's the process with the highest exception rate, the most human intervention, and the biggest revenue or compliance impact.

For Orange, it was customer onboarding. For Lambda, it was sales intelligence. For a major European telecom, it was support triage. Each started with one high-impact use case, proved the value, and expanded from there.

Part 4: The practical migration path

Phase 1: Identify and quantify (Weeks 1-2)

Map the gap between what's automated and what needs to be. Focus on:

- Exception queues: Where are humans handling the work that bots route to them? What does that cost in time and money?

- Manual high-value processes: Which processes did you target for RPA but couldn't automate? What's the business impact of keeping them manual?

- Maintenance burden: Which bots break most often? Which applications cause the most bot failures when they update?

The output is a prioritized list of opportunities ranked by business impact, not by technical ease. The hardest processes for bots are usually the most valuable for agents.
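Putting a number on an exception queue is straightforward arithmetic: exceptions per year times handling time times loaded labor cost. For example, 24,000 onboarding cases a year at a 40% exception rate and 20 minutes each comes to 3,200 hours, or $192,000 at $60 an hour. The Python sketch below ranks opportunities by that cost plus an estimated value at stake, and deliberately records build effort without sorting on it. All field names and figures are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    annual_transactions: int
    exception_rate: float         # share routed to a human queue
    minutes_per_exception: float  # average handling time per exception
    loaded_hourly_cost: float     # fully loaded cost of one staff hour
    value_at_stake: float         # estimated revenue or compliance impact per year
    build_effort_weeks: float     # recorded, but deliberately not used for ranking


def annual_exception_cost(o: Opportunity) -> float:
    """Money the exception queue consumes per year."""
    exceptions = o.annual_transactions * o.exception_rate
    hours = exceptions * o.minutes_per_exception / 60
    return hours * o.loaded_hourly_cost


def prioritize(opps: list[Opportunity]) -> list[Opportunity]:
    """Rank by business impact (queue cost + value at stake), not by ease."""
    return sorted(opps, key=lambda o: annual_exception_cost(o) + o.value_at_stake,
                  reverse=True)


if __name__ == "__main__":
    backlog = [
        Opportunity("invoice exceptions", 120_000, 0.05, 5, 45, 50_000, 2),
        Opportunity("customer onboarding", 24_000, 0.40, 20, 60, 1_000_000, 8),
    ]
    for o in prioritize(backlog):
        print(f"{o.name}: ${annual_exception_cost(o):,.0f}/yr in exception handling")
```

Onboarding ranks first here even though it takes four times the build effort, which is the point of ranking by impact rather than ease.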

Phase 2: Proof of concept (Weeks 2-6)

Start with one process. The selection criteria:

- High exception rate (30%+ of work handled by humans)

- Measurable outcome (revenue, cost savings, time saved, compliance improvement)

- Multiple systems involved (cross-system coordination is where agents shine)

- Business team willing to own it (not a delegated IT project)

A proper POC isn't a demo. It's a production deployment with measurable results. At Nexus, every engagement starts with a 3-month proof of concept tied to specific outcomes. Forward Deployed Engineers embed with your team and handle integration complexity so you're not figuring out a new platform alone.

Orange's POC: 4 weeks from start to production agents handling customer onboarding autonomously. 50% conversion improvement. ~$6M+ yearly revenue. 90% autonomous resolution. 100% team adoption.

Phase 3: Measure and compare (Weeks 6-12)

After the POC is running in production, measure the same things you'd measure for RPA, plus the dimensions that matter for agents:

- Autonomous resolution rate: What percentage of transactions does the agent handle without human intervention? (Orange: 90%)

- Exception handling: How does the agent handle the cases bots route to humans? Does it resolve them, escalate with context, or need improvement?

- Maintenance cost: How much time do you spend maintaining the agent versus maintaining bots for similar work? (Agents operate at the API level, so UI changes don't break them.)

- Business outcome: Revenue impact, cost savings, compliance metrics, customer satisfaction. (Lambda: $4B+ pipeline. Orange: ~$6M+ yearly revenue. European telecom: 40% support volume freed)

- Team adoption: Are the people who interact with the agent actually using it? (Orange: 100% adoption)
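A minimal Python sketch of a pilot scorecard, assuming you record how each transaction ended. It keeps clean escalations separate from cases that need improvement, matching the three exception outcomes above, and sets the agent's autonomous resolution rate beside the bot's straight-through rate (1 minus its exception rate). `PilotStats`, `scorecard`, and the sample counts are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PilotStats:
    total: int                   # transactions the agent received
    resolved_autonomously: int   # closed with no human involvement
    escalated_with_context: int  # handed to a human with a full context summary
    maintenance_hours: float     # upkeep time during the measurement window


def scorecard(agent: PilotStats, bot_exception_rate: float,
              bot_maintenance_hours: float) -> dict[str, float]:
    """Compare a pilot agent with the bot it replaces, over the same window."""
    unresolved = agent.total - agent.resolved_autonomously - agent.escalated_with_context
    return {
        "autonomous_resolution_rate": agent.resolved_autonomously / agent.total,
        "bot_straight_through_rate": 1 - bot_exception_rate,
        "clean_escalation_rate": agent.escalated_with_context / agent.total,
        "needs_improvement_rate": unresolved / agent.total,
        "maintenance_hours_saved": bot_maintenance_hours - agent.maintenance_hours,
    }


if __name__ == "__main__":
    pilot = PilotStats(total=1_000, resolved_autonomously=900,
                       escalated_with_context=80, maintenance_hours=6)
    for metric, value in scorecard(pilot, bot_exception_rate=0.35,
                                   bot_maintenance_hours=40).items():
        print(f"{metric}: {value:.2f}")
```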

Phase 4: Expand strategically (Month 3+)

Once the first agent proves value, expand to adjacent processes. The pattern that works:

Within the same department first. If sales intelligence delivered value, the next agents handle related sales processes (pipeline monitoring, account research, lead qualification). The team already trusts the approach.

Then across departments. If customer onboarding worked, support triage is a natural next step. Both involve customer interactions, exceptions, and cross-system coordination.

Let business teams lead. Each department identifies its highest-impact manual processes and builds agents for them. With Forward Deployed Engineer support and a proven platform, new agents deploy in days to weeks, not months.

The European telecom followed this pattern. Started with support. Expanded to compliance, registration, and other functions. A dozen agents in production. 40% of support volume freed across millions of interactions.

Part 5: What to look for in an agent platform

Not all "AI agent" platforms are equal. If you're evaluating options, here are the dimensions that matter.

Architecture: agent-first vs. AI-on-RPA

Some vendors are adding AI to RPA (UiPath's Agent Builder, Automation Anywhere's AI Agent Studio). Others built agent-first (Nexus). The difference shows up in how the platform handles exceptions, connects to systems, and maintains itself over time. AI on top of screen-level automation inherits the brittleness underneath.

Deployment model: platform vs. solution

A platform gives you software and leaves you to figure it out. A solution includes the expertise to make it work. Nexus embeds Forward Deployed Engineers with your team from day one. They handle integration complexity, agent design, and change management. This matters because deploying AI at scale is 10% technology and 90% organizational change.

Who builds and owns the agents

If the platform requires AI engineers or dedicated developers, you've recreated the RPA Center of Excellence bottleneck with a different technology. Look for platforms where business teams build and own the agents. The people who understand the workflow should control the automation.

Integration depth

Count the integrations, but also check the depth. Can agents pull data, make decisions, and execute actions across your CRM, ERP, communication tools, and custom systems? Nexus offers 4,000+ API-level integrations and deploys across Slack, Teams, WhatsApp, email, phone, and web.

Governance by design

Enterprise processes need audit trails, decision traceability, and compliance. Look for platforms where governance is built into how agents work, not layered on afterward. Every Nexus agent decision is logged and explainable. When it approves, you can see why. When it escalates, the human gets full context about what the agent tried, what it found, and where it's uncertain.
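What "logged and explainable" can look like in practice: a minimal append-only decision log in JSON Lines format, where each record carries the outcome, the rationale, and the evidence used. This is a generic sketch using only the Python standard library, not how Nexus stores decisions; the record fields and function names are assumptions.

```python
from __future__ import annotations

import io
import json
from datetime import datetime, timezone
from typing import Iterable, TextIO


def log_decision(out: TextIO, agent: str, case_id: str, outcome: str,
                 rationale: str, evidence: dict) -> dict:
    """Append one decision as a JSON line; existing lines are never rewritten."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "case_id": case_id,
        "outcome": outcome,      # e.g. "approved" or "escalated"
        "rationale": rationale,  # why the agent decided this way
        "evidence": evidence,    # the inputs it relied on
    }
    out.write(json.dumps(record, sort_keys=True) + "\n")
    return record


def trace(lines: Iterable[str], case_id: str) -> list[dict]:
    """Reconstruct every logged decision for one case, in write order."""
    return [r for r in map(json.loads, lines) if r["case_id"] == case_id]


if __name__ == "__main__":
    buf = io.StringIO()  # stands in for an append-only file or log store
    log_decision(buf, "onboarding-agent", "C-7", "approved",
                 "ID verified and coverage confirmed", {"id_check": "pass"})
    log_decision(buf, "onboarding-agent", "C-8", "escalated",
                 "billing and CRM addresses conflict", {"crm": "A", "billing": "B"})
    for rec in trace(buf.getvalue().splitlines(), "C-8"):
        print(rec["outcome"], "-", rec["rationale"])
```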

Proof before commitment

RPA taught enterprises an expensive lesson about buying platforms based on projected ROI. Look for vendors that prove value before you commit. Nexus starts every engagement with a 3-month POC tied to specific outcomes. 100% POC-to-contract conversion rate, because the results are visible before the commitment.

Part 6: Common concerns (and honest answers)

"We've invested millions in RPA. Is that wasted?"

No. The investment isn't wasted for the processes RPA handles well: predictable, screen-based processes with low exception rates and stable UIs. Those bots can keep running. The question is whether those processes represent the full scope of what you need to automate. The processes still done manually, the ones in your "too complex for RPA" backlog, are where agents deliver value. You're adding intelligence for the work bots can't reach, not discarding what already works.

"Our RPA vendor says they're adding AI agents. Can't we just wait?"

You can. But understand the architectural distinction. UiPath adding Agent Builder on top of RPA is AI augmenting screen-level automation. Nexus is AI as the foundation with process execution built on top. The results differ because the starting point differs. When something unexpected happens, UiPath's agentic features are still constrained by the underlying bot architecture. Nexus agents reason from the ground up.

UiPath's CEO described agentic automation as "act two," an implicit acknowledgment that "act one" (predefined scripts) reached its limits. The question is whether adding act two to act one's foundation produces the same result as starting from act two directly.

"How do we get buy-in from leadership that already invested in RPA?"

Frame it as expansion, not replacement. "We automated 15% of targeted processes with RPA. Here's the 85% we couldn't reach because those processes need judgment, not scripts. AI agents can handle much of that 85%. We're not throwing away what works. We're reaching the work that RPA structurally couldn't touch."

Then prove it with a POC. Measurable outcomes in 4 to 6 weeks are more persuasive than any pitch deck.

"What about security and compliance?"

This is a legitimate concern, and it should be a hard requirement. Nexus is SOC 2 Type II, ISO 27001, and ISO 42001 certified, and GDPR compliant. Every agent decision is logged with full audit trails and decision traceability. Role-based access control governs who can build, modify, and deploy agents. For regulated industries, this governance is built into how agents work, not added later.
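Role-based access control reduces to a mapping from roles to allowed actions. The Python sketch below assumes four hypothetical roles and separates building from deploying, so a builder cannot push an agent to production alone. The role names and the `is_allowed` helper are illustrative, not any platform's API.

```python
from __future__ import annotations

from enum import Enum
from typing import Iterable


class Action(Enum):
    BUILD = "build"
    MODIFY = "modify"
    DEPLOY = "deploy"


# Hypothetical role-to-permission mapping; a real platform defines its own roles.
ROLE_PERMISSIONS: dict[str, frozenset[Action]] = {
    "viewer": frozenset(),
    "builder": frozenset({Action.BUILD, Action.MODIFY}),
    "approver": frozenset({Action.DEPLOY}),
    "admin": frozenset(Action),  # every action
}


def is_allowed(roles: Iterable[str], action: Action) -> bool:
    """True if any of the user's roles grants the action; unknown roles grant nothing."""
    return any(action in ROLE_PERMISSIONS.get(role, frozenset()) for role in roles)


if __name__ == "__main__":
    print(is_allowed({"builder"}, Action.DEPLOY))              # False
    print(is_allowed({"builder", "approver"}, Action.DEPLOY))  # True
```

Keeping `DEPLOY` out of the builder role is one way to preserve business-team ownership without giving up a review step; a production setup would more likely load the mapping from your identity provider than hard-code it.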

"How long does the transition actually take?"

First production agent: 2 to 6 weeks with Forward Deployed Engineer support. That includes integration, agent design, testing, and deployment. Orange went from kickoff to production agents in 4 weeks. Lambda deployed in days.

Full program expansion depends on scope, but the pattern is iterative: prove value on one process, expand to adjacent processes, then scale across departments. Most enterprises see the first measurable results within the POC period.

The bottom line

RPA delivered value for what it was designed to do. But most enterprises bought it expecting process transformation, and the category structurally can't deliver that for processes requiring judgment, exceptions, and cross-system decisions.

AI agents aren't a better version of RPA. They're a different approach. Agents reason about business logic instead of following scripts. They handle exceptions instead of routing them. They connect through APIs instead of screens. They're built by business teams instead of developers.

The enterprises that have made this transition share a consistent pattern:

- Orange: From a chatbot with a 27% drop-out rate to autonomous agents with 90% resolution, ~$6M+ yearly revenue, 4-week deployment, 100% adoption.

- Lambda: From rigid automation that broke on every change to agents monitoring 12,000 accounts, $4B+ pipeline, 24,000+ hours of research capacity annually. Built by a non-engineer.

- European telecom: From bots handling the predictable fraction to agents handling the full 100%. A dozen agents. 40% support volume freed. 12-week deployment.

They didn't replace RPA because it was bad. They moved to agents because the work that matters most needed intelligence, not scripts.

Worth exploring?

Every Nexus engagement starts with a 3-month proof of concept tied to measurable outcomes. Forward Deployed Engineers embed with your team from day one. You see the results before committing. You can exit anytime.

100% of clients who started a POC converted to an annual contract. Every one.

