How to Deploy Multilingual AI Agents Across Markets (2026 Guide)

From single-market chatbot to multi-market autonomous agents. A practical guide to deploying AI that completes workflows across languages, regulations, and systems. Orange deployed across multiple European markets in 4 weeks.

Most enterprises start with a chatbot in one market and one language. It works. Support tickets go down. Response times improve. Leadership asks the obvious question: "Can we roll this out across all our markets?"

That's where things get complicated. Not because of language. Language is the easy part in 2026. LLMs handle translation natively. Most platforms support 50+ languages. Getting your AI to speak Portuguese or Japanese isn't the challenge it was in 2023.

The hard part is everything behind the language. Every market has different regulatory requirements. Different system configurations. Different compliance rules. Different process flows. Different escalation paths. A chatbot that answers questions in French is useful. An agent that completes the entire onboarding workflow in France, following French regulations, integrating with French systems, and routing exceptions to the right French-speaking team, is running a multilingual operation. Those are different problems.

This guide covers the practical path from single-market chatbot to multi-market autonomous agents. What changes between markets. What doesn't. Where enterprises get stuck. And what it actually takes to deploy AI that works across languages, regulations, and systems.

Why multilingual deployment is harder than it looks

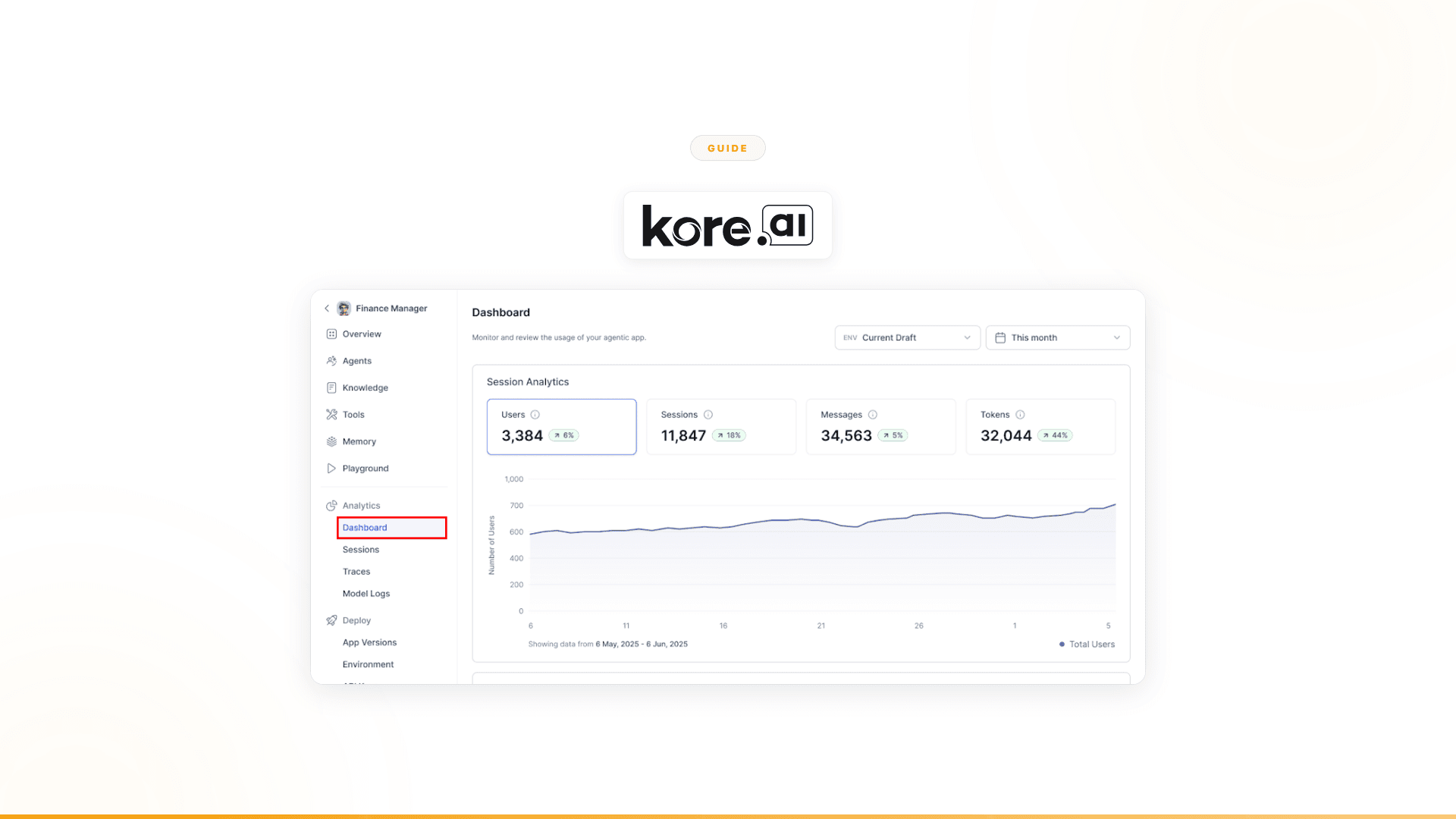

The conversation layer scales easily. Add a language to your chatbot. Train a few intents. Localize the responses. Done. Platforms like Yellow.ai (135+ languages), Kore.ai (120+), and others have made this straightforward.

The workflow layer doesn't scale the same way. Here's why.

Regulatory variation

A customer onboarding workflow in Germany follows German data protection requirements (GDPR plus national implementation). The same workflow in Brazil follows LGPD. In India, it's the DPDP Act. These aren't just different privacy policies. They affect what data you can collect, how you validate identity, what you're required to disclose, how long you can store information, and what the customer can request at any point.

A multilingual chatbot that handles conversations in all three markets can be deployed in days. A workflow that handles onboarding compliance in all three markets requires understanding the regulatory specifics in each one.

System variation

Enterprise systems aren't uniform across markets. The CRM instance in France might run a different configuration than the one in the UK. The billing platform in Latin America might be a different vendor entirely. ERP modules differ. Internal tools differ. APIs differ. Authentication methods differ.

A chatbot talks to customers. It doesn't care which CRM is behind it. An autonomous agent that completes workflows interacts with all of these systems directly. Each market's system landscape is a different integration challenge.

Process variation

The same business process doesn't always work the same way across markets. Escalation paths differ. Approval chains differ. Exception handling differs. What constitutes a "standard" case in one market might be an edge case in another.

An agent that handles customer onboarding in France might route compatibility issues to a technical team in Paris. The same agent in Portugal routes to a different team structure entirely. The workflow logic adapts by market, not just the language.
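As a rough illustration of routing that adapts by market, the sketch below looks up an exception queue per market and falls back to a default. The market codes, queue names, and the `route_exception` helper are all hypothetical, chosen only to mirror the Paris and Portugal example above.

```python
# Hypothetical routing table: market -> exception type -> team queue.
# Queue names are illustrative, not taken from any real deployment.
ROUTES = {
    "FR": {"compatibility": "paris-technical", "identity": "fr-compliance"},
    "PT": {"compatibility": "lisbon-shared-services"},
}
DEFAULT_QUEUE = "global-operations"


def route_exception(market: str, exception_type: str) -> str:
    """Return the queue for an exception, falling back to a default queue."""
    return ROUTES.get(market, {}).get(exception_type, DEFAULT_QUEUE)


print(route_exception("FR", "compatibility"))
print(route_exception("PT", "compatibility"))
```

The lookup code is identical for every market. Only the table differs, which is the point of treating routing as market data rather than agent logic.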

Team and channel variation

Different markets prefer different communication channels. WhatsApp dominates in Latin America and parts of APAC. LINE in Japan and Thailand. WeChat in China. Teams and Slack internally. Email in some markets, messaging in others. The channels your AI uses to interact with customers and internal teams need to match what each market actually uses.

The five stages of multilingual AI maturity

Most enterprises move through these stages. Some skip ahead. Some get stuck at Stage 2 for years. Understanding where you are helps determine what to build next.

Stage 1: Single-market chatbot

What it looks like: A chatbot deployed in one market, one language. Handles FAQs, routes to human agents, deflects basic support volume.

What it solves: First-line response times. Volume deflection on common questions. 24/7 availability in one market.

What it doesn't solve: The work behind the conversations. Workflow completion. Multi-market operations. Complex exception handling.

Typical tools: Any conversational AI platform (Yellow.ai, Ada, Intercom, Freshdesk). Or a simple chatbot built on Dialogflow or Botpress.

Stage 2: Multi-language chatbot

What it looks like: Same chatbot, now deployed in 3-10 languages. Conversations happen in the customer's language. Routing rules might vary slightly by language/market.

What it solves: Multilingual first-line support. Broader customer coverage. Consistent conversation quality across languages.

What it doesn't solve: Still the same structural limitation. The chatbot answers questions in more languages. The operational work behind those answers is still manual, and now it's manual across multiple markets with different requirements.

Typical tools: Yellow.ai, Kore.ai, Cognigy, or any platform with broad language support.

Where enterprises get stuck: Most enterprises plateau here for 1-3 years. The chatbot handles conversations across markets. Leadership sees deflection metrics improve. But customer satisfaction doesn't change proportionally because the real delays and errors happen in the workflow, not the conversation. The chatbot answers the question quickly. The process behind it takes days.

Stage 3: Single-market autonomous agent

What it looks like: An AI agent that doesn't just converse but completes an entire workflow in one market. Customer onboarding. Claims processing. Account updates. The agent collects data, validates it, makes decisions within guardrails, handles exceptions, and executes actions across systems.

What it solves: Workflow completion in one market. Dramatically faster processing. Fewer errors (no manual data entry). Consistent compliance. Reduced operational headcount on the specific workflow.

What it doesn't solve: Other markets still run the old way. The knowledge and configuration for this market's agent doesn't automatically transfer to other markets.

Key insight: This is the stage where the value inflection happens. The jump from chatbot (conversation) to agent (workflow) is a category change. Enterprises that reach this stage typically see order-of-magnitude improvements in the specific workflow the agent handles.

Stage 4: Multi-market autonomous agents

What it looks like: The agent architecture from Stage 3, deployed across multiple markets. Each market's agent handles the local workflow variation: regulatory requirements, system integrations, process flows, escalation paths. One platform. Multiple market-specific configurations.

What it solves: Workflow completion across markets. Consistent quality with local adaptation. Centralized governance with local execution. Scale without proportional headcount.

What it takes: This is the stage most enterprises are trying to reach, and most can't get there with conversational AI platforms. The agent needs to handle not just language but regulatory, system, and process variation by market.

Stage 5: Cross-market agent orchestration

What it looks like: Agents across markets coordinate with each other. A customer interaction in one market triggers workflows in another. Compliance monitoring spans multiple jurisdictions. Data harmonization keeps systems aligned across markets. Agents don't just work in markets. They work across them.

What it solves: Cross-border operations. Multi-market compliance monitoring. Centralized reporting with local execution. Enterprise-level orchestration of market-specific agents.

What needs to change between markets

When deploying agents across markets, here's a practical breakdown of what changes and what doesn't.

What stays the same

- Agent architecture. The fundamental design, how the agent collects data, makes decisions, handles exceptions, and executes actions, is consistent across markets. You don't rebuild from scratch for each market.

- Platform infrastructure. Security, governance, audit trails, monitoring, and deployment pipelines are centralized. One platform. Multiple market configurations.

- Business logic framework. The structure of the workflow stays the same. A customer onboarding workflow in every market follows the same pattern: collect data, validate, check compliance, execute. The specifics differ. The skeleton doesn't.

- Integration architecture. How the agent connects to systems follows consistent patterns, even when the systems themselves differ by market.
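The shared skeleton described above (collect, validate, check compliance, execute) could be sketched as a fixed sequence of step names, with each market supplying its own implementation of every step. This is a minimal sketch under assumptions: `SKELETON`, `run_workflow`, and the placeholder steps are hypothetical names, and real steps would call market systems instead of setting flags.

```python
from typing import Callable, Dict, List

Step = Callable[[dict], dict]

# The fixed skeleton from the text; every market runs these steps in this order.
SKELETON: List[str] = ["collect", "validate", "check_compliance", "execute"]


def run_workflow(steps: Dict[str, Step], case: dict) -> dict:
    """Run a case through the shared skeleton using market-supplied steps."""
    missing = [name for name in SKELETON if name not in steps]
    if missing:
        raise ValueError(f"market is missing steps: {missing}")
    for name in SKELETON:
        case = steps[name](case)
        # Record which step ran so the order is visible afterwards.
        case.setdefault("trace", []).append(name)
    return case


def placeholder_step(flag: str) -> Step:
    """Build a stand-in step that only marks itself as done (illustrative)."""
    return lambda case: {**case, flag: True}


fr_steps = {name: placeholder_step(name + "_done") for name in SKELETON}
result = run_workflow(fr_steps, {"customer": "example"})
print(result["trace"])
```

A market that has not implemented every step fails before any step runs, rather than halfway through a customer's case.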

What changes

| Component | What varies by market | Example |
|---|---|---|
| Language | Conversation language, document templates, notification text | French in France, Portuguese in Brazil, German in Germany |
| Regulatory rules | Data collection requirements, disclosure obligations, consent flows | GDPR implementation in Germany vs LGPD in Brazil |
| System integrations | CRM instance, billing platform, ERP configuration, internal tools | Salesforce in Europe, local CRM in Latin America |
| Process flows | Escalation paths, approval chains, exception handling, team routing | Technical team in Paris vs shared service center in Lisbon |
| Compliance checks | What gets validated, against which rules, with what documentation | Identity verification requirements differ by jurisdiction |
| Channels | Customer-facing and internal communication channels | WhatsApp in Brazil, web chat in Germany, LINE in Japan |
| Thresholds and rules | Decision boundaries, approval limits, SLA targets | Different credit thresholds by market |

The deployment playbook

Here's what a practical multi-market deployment looks like, based on what enterprises have actually done.

Step 1: Prove value in one market

Don't try to deploy across all markets simultaneously. Pick your highest-impact market. Deploy an agent that completes one high-value workflow end-to-end. Measure the results.

This isn't just about reducing risk. It's about building the organizational proof that AI can complete workflows, not just conversations. That proof is what gets buy-in for multi-market expansion.

What to measure:

- Workflow completion rate (not conversation deflection)

- Processing time reduction

- Error rate reduction

- Revenue impact or cost savings

- Team adoption rate

- Customer satisfaction on the specific workflow
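To make the difference between deflection and workflow completion concrete, here is a small sketch that computes both from the same case records. The `CaseRecord` fields and the sample data are assumptions for illustration, not a schema from any particular platform.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CaseRecord:
    deflected: bool            # conversation ended without a human agent
    workflow_completed: bool   # the underlying work finished with no manual step
    processing_hours: float    # time from request to completed work


def summarize(records: List[CaseRecord]) -> Dict[str, float]:
    """Compute conversation-level and workflow-level metrics side by side."""
    n = len(records)
    if n == 0:
        raise ValueError("no records to summarize")
    return {
        "deflection_rate": sum(r.deflected for r in records) / n,
        "workflow_completion_rate": sum(r.workflow_completed for r in records) / n,
        "mean_processing_hours": sum(r.processing_hours for r in records) / n,
    }


# Made-up sample: most conversations are deflected, few workflows complete.
sample = [
    CaseRecord(True, False, 1.0),
    CaseRecord(True, False, 2.0),
    CaseRecord(True, True, 3.0),
    CaseRecord(False, False, 2.0),
]
print(summarize(sample))
```

In this made-up sample the deflection rate looks healthy while the completion rate does not, which is the gap the list above is meant to expose.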

Step 2: Document what's market-specific

Before expanding, catalog everything that varies by market for the workflow you've deployed. Regulatory requirements. System integrations. Process flows. Escalation paths. Compliance rules. This becomes your market configuration template.

Most enterprises discover that 60-80% of the agent's logic is market-independent. The remaining 20-40% is market-specific configuration. Knowing exactly what that 20-40% consists of makes multi-market deployment predictable instead of chaotic.
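One way to start this catalog, sketched here under assumptions, is to write each market's settings as a flat dictionary and separate the settings that are identical everywhere from those that differ. The setting names and values are invented for illustration, and counting settings is only a rough proxy for the share of logic that is market-independent.

```python
_MISSING = object()  # sentinel for a setting a market does not define


def split_config(markets: dict) -> tuple:
    """Split setting names into shared (identical everywhere) and market-specific."""
    all_keys = set().union(*(cfg.keys() for cfg in markets.values()))
    shared, specific = set(), set()
    for key in all_keys:
        values = [cfg.get(key, _MISSING) for cfg in markets.values()]
        if all(v == values[0] for v in values):
            shared.add(key)
        else:
            specific.add(key)
    return shared, specific


# Hypothetical settings for two markets.
markets = {
    "FR": {"steps": "collect>validate>check>execute", "max_retries": 3,
           "audit": True, "language": "fr", "crm": "crm-eu"},
    "BR": {"steps": "collect>validate>check>execute", "max_retries": 3,
           "audit": True, "language": "pt", "crm": "crm-latam"},
}
shared, specific = split_config(markets)
print(sorted(shared), sorted(specific))
print(len(shared) / (len(shared) + len(specific)))
```

The `specific` set is the starting point for the market configuration template. The `shared` set is what should live in the core agent.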

Step 3: Build the market adaptation layer

The best multi-market deployments separate market-specific configuration from core agent logic. The agent's decision-making framework stays the same. Market-specific rules (regulatory requirements, system endpoints, process flows) are injected as configuration, not hard-coded into the agent.

This means deploying to a new market doesn't require rebuilding the agent. It requires configuring the market-specific layer: regulatory rules, system integrations, process variations, and language.
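A minimal sketch of configuration injection, assuming a simplified onboarding decision: the agent class contains no market names, and everything market-specific arrives through a `MarketConfig` object. The field names, consent labels, limits, and `crm.example` URLs are placeholders and do not reflect actual legal requirements or any vendor's API.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class MarketConfig:
    language: str
    crm_endpoint: str                   # placeholder URL, not a real service
    required_consents: Tuple[str, ...]  # illustrative labels, not legal guidance
    approval_limit: float               # illustrative threshold


class OnboardingAgent:
    """Core logic; holds no market names or market-specific constants."""

    def __init__(self, config: MarketConfig):
        self.config = config

    def decide(self, application: dict) -> str:
        given = set(application.get("consents", []))
        missing = [c for c in self.config.required_consents if c not in given]
        if missing:
            return "request_consent:" + ",".join(missing)
        if application.get("amount", 0) > self.config.approval_limit:
            return "escalate"
        return "approve"


# Adding a market means adding an entry here, not changing OnboardingAgent.
CONFIGS: Dict[str, MarketConfig] = {
    "FR": MarketConfig("fr", "https://crm.example/fr", ("data_processing",), 1000.0),
    "BR": MarketConfig("pt", "https://crm.example/br",
                       ("data_processing", "marketing"), 500.0),
}

application = {"consents": ["data_processing"], "amount": 200}
for market, config in CONFIGS.items():
    print(market, OnboardingAgent(config).decide(application))
```

The same application produces different outcomes per market because the configuration differs, while `decide` stays untouched.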

Step 4: Deploy market by market, fast

Once the first market is proven and the market adaptation layer is built, subsequent markets deploy in days to weeks, not months. The core agent exists. The configuration template exists. Each new market is a configuration exercise, not a development project.

Step 5: Centralize governance, localize execution

Governance (security, audit trails, compliance monitoring, decision traceability) is centralized. Execution (the actual workflow, adapted for each market's rules and systems) is local. One platform provides unified visibility across all markets. Each market's agents handle local requirements.
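The split between central governance and local execution could look roughly like the wrapper below: the handler is market-specific, while the audit record is written in one place regardless of market. `AUDIT_LOG` is an in-memory stand-in for a central audit store, and `governed` is a hypothetical helper name.

```python
import json
from datetime import datetime, timezone
from typing import Callable, List

AUDIT_LOG: List[str] = []  # stand-in for a central, append-only audit store


def governed(market: str, action: str,
             handler: Callable[[dict], dict], payload: dict) -> dict:
    """Run a market-local handler and record the outcome centrally."""
    entry = {
        "market": market,
        "action": action,
        "at": datetime.now(timezone.utc).isoformat(),
    }
    try:
        result = handler(payload)
        entry["status"] = "ok"
        return result
    except Exception as exc:
        entry["status"] = "error"
        entry["error"] = type(exc).__name__
        raise
    finally:
        # Written on success and on failure, so no execution goes unrecorded.
        AUDIT_LOG.append(json.dumps(entry, sort_keys=True))


def activate_line_fr(payload: dict) -> dict:
    """Hypothetical France-specific handler."""
    return {**payload, "activated": True}


governed("FR", "activate_line", activate_line_fr, {"customer": "example"})
print(AUDIT_LOG[-1])
```

Because the record is written in `finally`, a failing local handler still leaves a central trace before the error propagates.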

Orange: from single-market chatbot to multi-market agents in 4 weeks

The clearest production example of this playbook is Orange Group.

Before: Orange, a multi-billion-euro telecom with 120,000+ employees, had a CX chatbot for customer interactions. It handled conversations. It had a 27% drop-out rate. Customer onboarding across multiple European markets involved human coordination across systems, compliance frameworks, and market-specific requirements in each country.

The shift: Orange didn't upgrade their chatbot. They deployed autonomous agents on Nexus that complete the entire customer onboarding workflow. Not conversations about onboarding. The actual onboarding: data collection, validation against market-specific regulations, compatibility checks, exception routing, and cross-system execution.

The deployment: Across multiple European markets and languages in 4 weeks. A business team built the agents, not engineers. A Forward Deployed Engineer from Nexus embedded with the team, handling integration complexity and ensuring adoption.

The results:

- 50% conversion improvement

- ~$6M+ yearly revenue

- 90% autonomous resolution

- 100% team adoption

- Multi-market deployment in 4 weeks

What made it work:

- Business team ownership. The people who understand the workflow built the agents. No dependency on engineering.

- Forward Deployed Engineer. A dedicated engineer embedded with the team to handle integration complexity and organizational change. Not a support ticket. A person.

- Platform-level market adaptation. One platform, multiple market configurations. Deploying to an additional market didn't mean rebuilding.

- Workflow-first design. The agents weren't chatbots with more features. They were designed around the work: collect, validate, decide, route, execute.

Common mistakes in multilingual AI deployment

Mistake 1: Treating translation as the hard part

In 2026, translation is a commodity. LLMs handle it natively. Spending months perfecting multilingual NLU intent libraries when the real bottleneck is workflow completion is optimizing the 10% while ignoring the 90%. If your multilingual conversations work but your multilingual workflows don't, language isn't your problem.

Mistake 2: Deploying all markets simultaneously

The instinct to "go global" from day one usually leads to slow, complex projects that don't deliver results in any market quickly enough to maintain executive sponsorship. Prove one market. Document the variation. Expand fast.

Mistake 3: Hard-coding market logic

Building separate agents for each market, with market-specific logic embedded in the core workflow, creates maintenance nightmares. When the core workflow changes, you have to update it separately in every market. Instead, separate market-specific configuration from core logic. This is an architecture decision that pays off immediately at the second market and compounds from there.

Mistake 4: Ignoring the organizational layer

Technology deploys faster than organizations change. A multi-market AI deployment isn't just a technology project. It requires local teams to trust the agent, adjust their workflows, and adopt new processes. Forward Deployed Engineers exist precisely for this reason. The technology works on day one. Adoption takes deliberate effort. Nexus has a 100% POC-to-contract conversion rate because dedicated engineers handle the organizational change, not just the technology.

Mistake 5: Measuring conversations instead of workflows

Multilingual deflection rates are a vanity metric if the work behind those conversations is still manual. Measure workflow completion rate. Processing time. Error rate. Revenue impact. Those are the numbers that tell you whether your multilingual AI deployment is working.

What to look for in a multilingual agent platform

If you're evaluating platforms for multi-market agent deployment, here's what matters.

| Capability | Why it matters | Questions to ask |
|---|---|---|
| Workflow completion | The agent needs to finish the job, not just the conversation | Does the platform complete end-to-end workflows, or just conversations? |
| Market-specific configuration | Each market has different rules, systems, and processes | How does the platform handle regulatory and process variation by market? |
| Integration depth | Agents need to interact with local systems in each market | How many integrations? Can it connect to our specific systems in each market? |
| Business team ownership | The people who understand the workflow should build the agents | Can non-engineers build and modify agents? |
| Centralized governance | Security, compliance, and audit trails need to span all markets | Is there unified visibility across all markets? Full audit trails? |
| Deployment support | Multi-market deployment requires more than software | Is there dedicated engineering support for deployment and adoption? |
| Language coverage | The conversation layer needs to work in each market's language | How many languages? At what depth? |

The gap between multilingual conversations and multilingual operations

Most enterprises searching for "how to deploy multilingual AI agents" have already deployed multilingual chatbots. They've solved the conversation layer. They're searching because they've realized the conversation wasn't the bottleneck.

The bottleneck is the work. In every market. With every market's regulations, systems, and processes. That's the 90% that conversational AI doesn't reach.

Multilingual AI agents, real agents that complete workflows across markets, are a different category from multilingual chatbots. They require different architecture, different measurement, and different deployment approaches.

The enterprise that cracks this, that deploys AI completing workflows across markets with local adaptation and centralized governance, doesn't just save on support costs. It transforms how the entire business operates internationally.

Worth exploring?

If you're looking to move beyond multilingual chatbots to multilingual agents that complete workflows across markets, it might be worth seeing how Orange deployed across multiple European markets in 4 weeks, or how a major European telecom freed 40% of support volume with agents that handle compliance, registration, and data harmonization across millions of interactions.

Every Nexus engagement starts with a 3-month proof of concept tied to measurable outcomes. Forward Deployed Engineers embed with your team from day one. You see the results before committing. You can exit anytime.

100% of clients who started a POC converted to an annual contract. Every one.
